Amazon S3 is the foundation of many modern data lakes: object storage that scales without practical capacity limits, costs a few cents per GB per month, and integrates tightly with the AWS analytics stack.

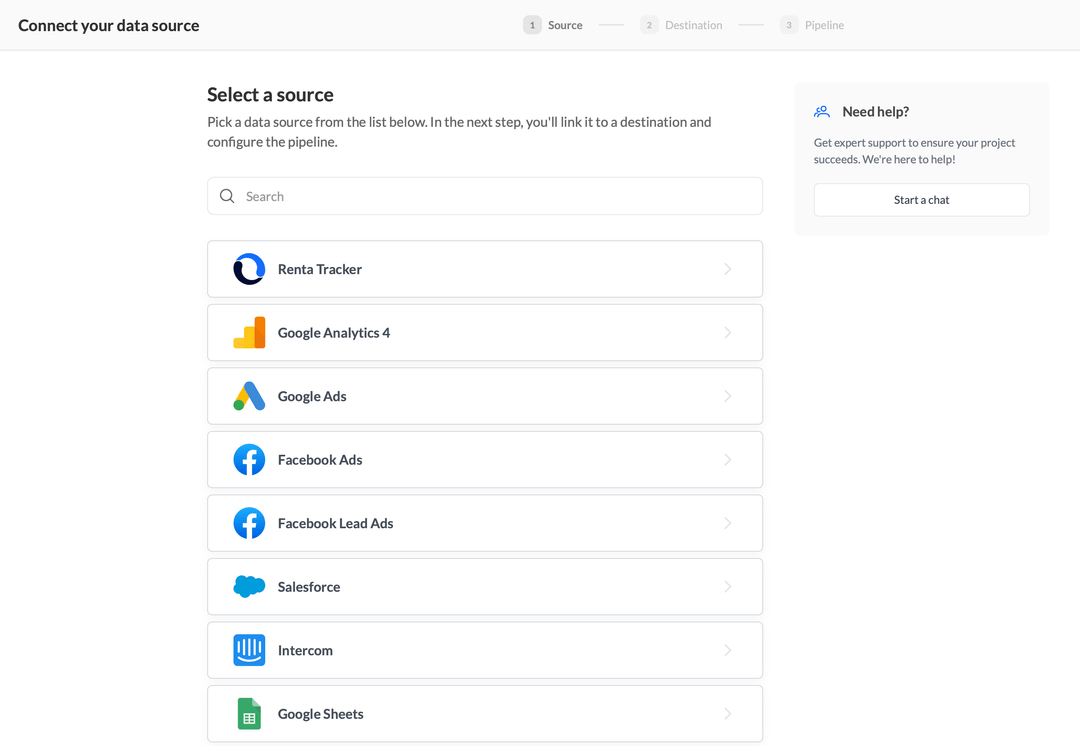

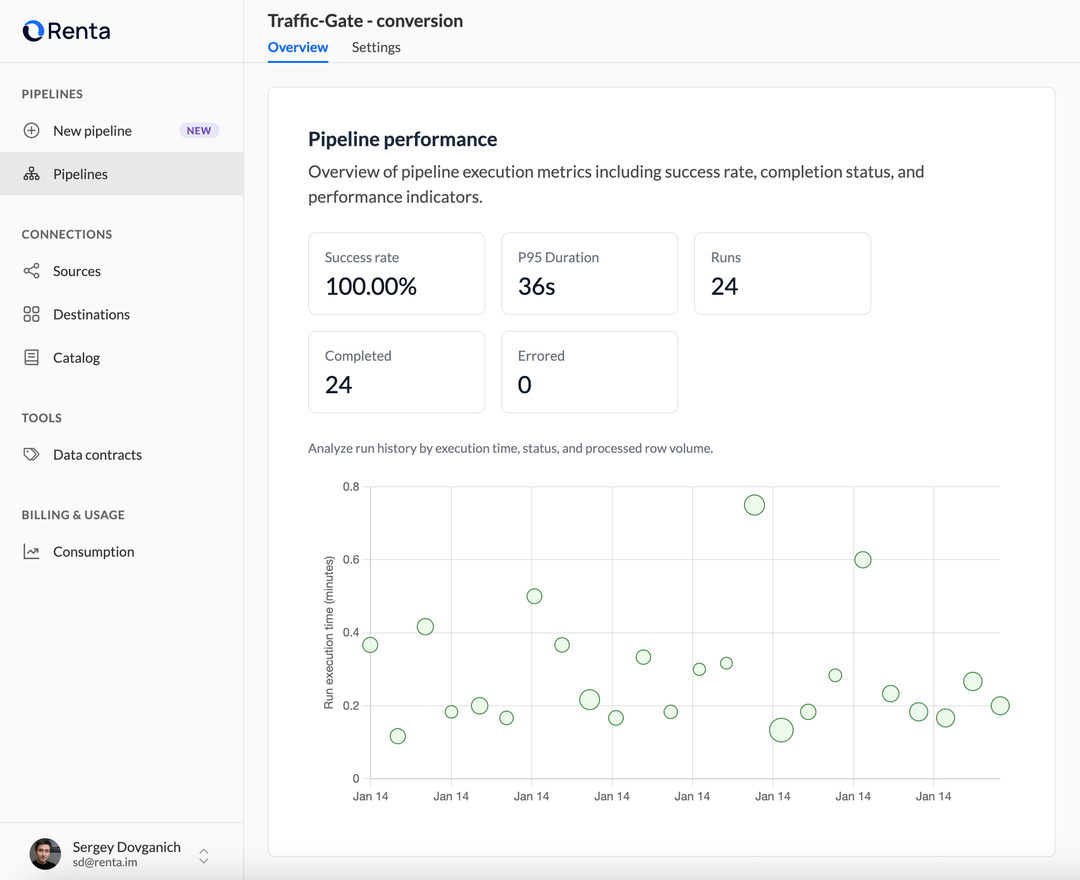

Renta turns S3 into your single source of truth by automatically pulling data from 100+ sources (ad platforms, CRMs, databases, SaaS tools), normalizing the schema and writing partitioned Parquet to your bucket.
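This page doesn't document Renta's exact folder layout. The sketch below is only an illustration of what "partitioned Parquet" usually means on S3. It assumes Hive-style `name=value` prefixes, which Athena, Spark and other Hive-compatible engines understand as partition columns. The `renta` prefix, the `source`/`dt` partition names, and both helper functions are hypothetical.

```python
from datetime import date
from urllib.parse import quote, unquote


def partition_key(prefix: str, source: str, day: date, part: int) -> str:
    """Build a Hive-style object key such as
    renta/source=google_ads/dt=2024-05-01/part-00000.parquet (hypothetical layout)."""
    return (
        f"{prefix.rstrip('/')}/"
        # "name=value" folders let query engines treat them as partition columns
        f"source={quote(source, safe='')}/"
        f"dt={day.isoformat()}/"
        # zero-padded file index keeps object listings in order
        f"part-{part:05d}.parquet"
    )


def parse_partitions(key: str) -> dict[str, str]:
    """Recover partition columns from an object key (segments containing '=')."""
    pairs = (segment.split("=", 1) for segment in key.split("/") if "=" in segment)
    return {name: unquote(value) for name, value in pairs}


if __name__ == "__main__":
    key = partition_key("renta", "google_ads", date(2024, 5, 1), 0)
    print(key)  # renta/source=google_ads/dt=2024-05-01/part-00000.parquet
    print(parse_partitions(key))  # {'source': 'google_ads', 'dt': '2024-05-01'}
```

Partitioning by source and day means a query filtered on `dt` only has to read the matching folders instead of scanning the whole bucket.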

Query the same files from Amazon Athena, Redshift Spectrum, EMR, Snowflake external tables, BigQuery external tables, Databricks or Trino — no duplication, no custom loaders, no missed rows.
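Querying the files in place generally means declaring an external table over the bucket prefix rather than loading the data. Below is a minimal sketch that renders an Athena `CREATE EXTERNAL TABLE` statement. It assumes a hypothetical layout of `s3://my-bucket/renta/source=<name>/dt=<YYYY-MM-DD>/` and made-up column names. `athena_ddl` is an illustrative helper, not part of Renta or any AWS SDK.

```python
def athena_ddl(table: str, columns: dict[str, str],
               partitions: dict[str, str], location: str) -> str:
    """Render a CREATE EXTERNAL TABLE statement for Hive-partitioned Parquet.

    Identifiers and types are interpolated verbatim, so use trusted values only.
    """
    # Regular data columns, one per line, quoted with backticks
    cols = ",\n".join(f"  `{name}` {typ}" for name, typ in columns.items())
    # Partition columns come from the folder names, not from the Parquet files
    parts = ", ".join(f"`{name}` {typ}" for name, typ in partitions.items())
    return (
        f"CREATE EXTERNAL TABLE IF NOT EXISTS `{table}` (\n{cols}\n)\n"
        f"PARTITIONED BY ({parts})\n"
        "STORED AS PARQUET\n"
        f"LOCATION '{location.rstrip('/')}/'"
    )


if __name__ == "__main__":
    print(athena_ddl(
        "ads_daily",
        {"campaign_id": "string", "clicks": "bigint", "cost": "double"},
        {"source": "string", "dt": "string"},
        "s3://my-bucket/renta",
    ))
```

Creating the table does not register existing partitions. Athena still needs `MSCK REPAIR TABLE ads_daily` or partition projection to discover the `source=`/`dt=` folders. Other engines in the list above declare external tables with their own syntax over the same files.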